With Improved robots.txt you get the world's most advanced, optimised, Magento-specific robots.txt for your store! Improved robots.txt packs in all our experience in Magento development and SEO, so you can easily get the best basis for your Magento SEO!

Made in Germany

Easy Composer installation

Improved Import PWA Ready

PHP 8.4 compatible

The extension is compatible with all recent versions of Magento 2.4.5 Open Source (Community), Adobe Commerce (Enterprise), and Cloud Edition, including B2B & Omnichannel!

NOTE: Magento 2 versions 2.1, 2.2, and 2.3 no longer receive updates from Adobe.

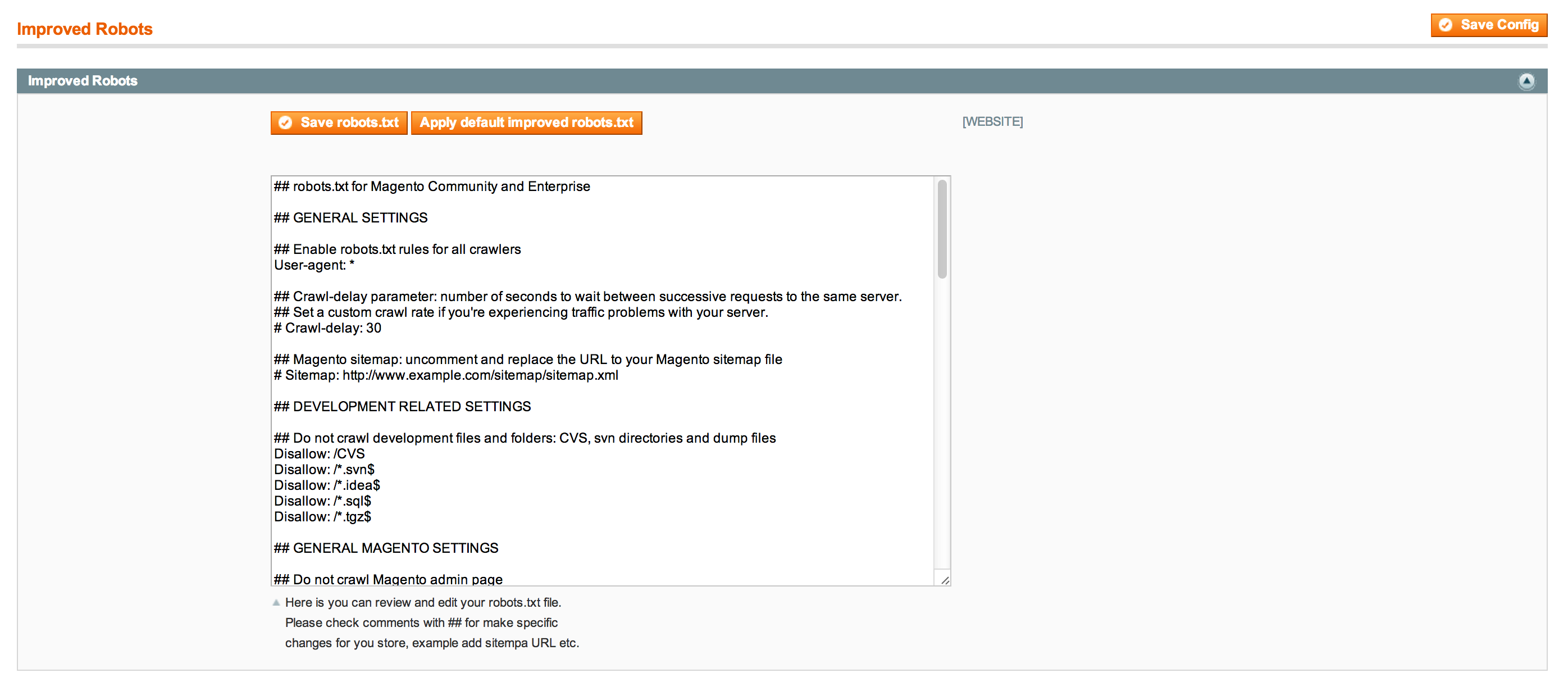

A correct robots.txt is a very important basic feature for proper Search Engine Optimisation (SEO) of your Magento e-commerce store. The robots.txt file sits in the site root and gives search engine bots guidelines on how to crawl the website: which pages to index and which to leave out. Magento is a very complex system with a lot of folders and URL structures, which is why it is not so easy to create a correct robots.txt for a web store. You always have to take care not to lose any important pages and, at the same time, not to add trash pages to the search engine index.

Improved robots.txt is your simplest and most reliable tool for building correct SEO for your Magento store and pushing only the right pages of your store to search engines! With Improved robots.txt you get the most advanced, optimised, Magento-specific robots.txt for your store! Improved robots.txt packs in all our experience in Magento development and SEO, so you can easily get the best basis for your store's SEO according to best practices!
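To make the idea of "right pages in, trash pages out" concrete, here is a minimal sketch in Python that checks URLs against a small robots.txt using the standard library's `urllib.robotparser`. The rules and paths below (`/checkout/`, `/customer/`, `/catalogsearch/`) are illustrative assumptions about a typical Magento store, not the file that Improved robots.txt actually ships, and the helper name `is_allowed` is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules only (an assumption, not the extension's real output):
# keep cart, account and internal search pages out of the crawl.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /customer/
Disallow: /catalogsearch/
"""


def is_allowed(path: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the sample rules let `user_agent` crawl `path`."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines.
    parser.parse(SAMPLE_ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, path)


if __name__ == "__main__":
    # Hypothetical store paths used purely for demonstration.
    for path in ("/some-product.html", "/checkout/cart/", "/catalogsearch/result/"):
        print(path, "allowed" if is_allowed(path) else "blocked")
```

Note that Python's parser uses simple prefix matching, so this sketch avoids wildcard patterns; real search engine crawlers may interpret more advanced rules differently.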